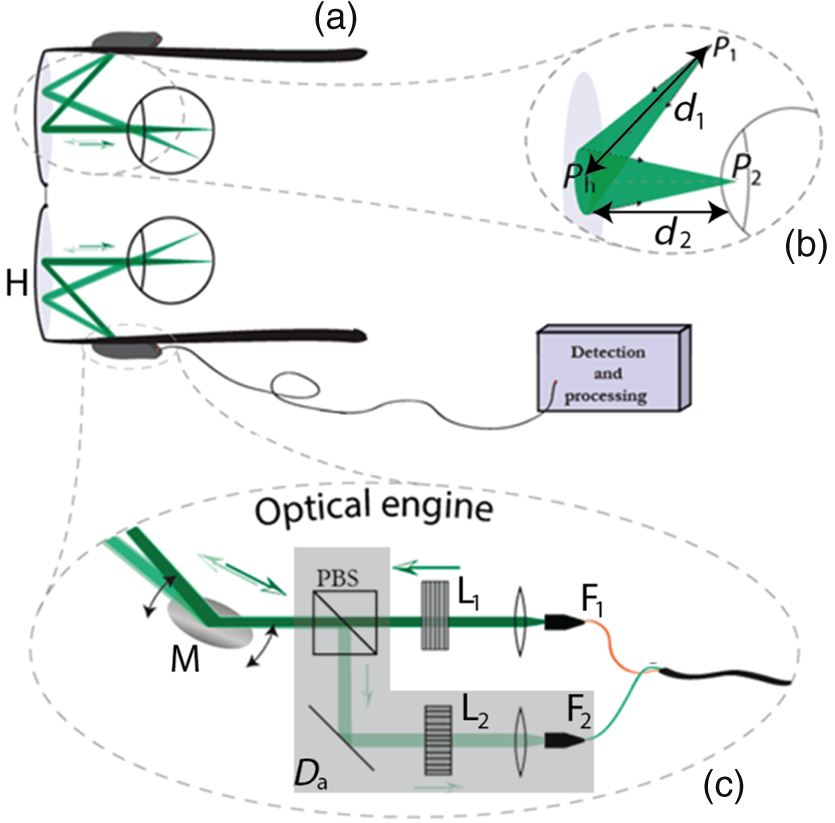

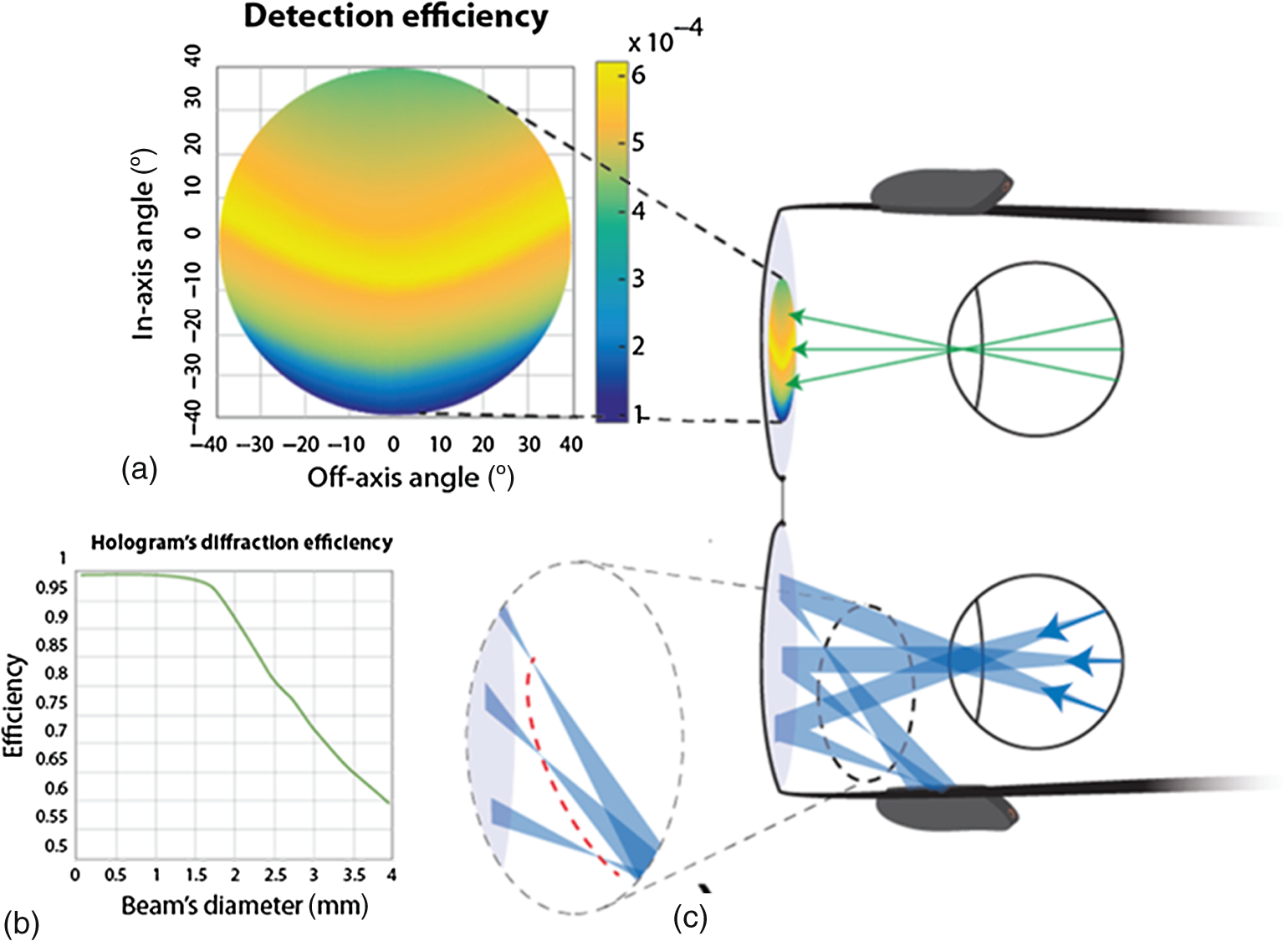

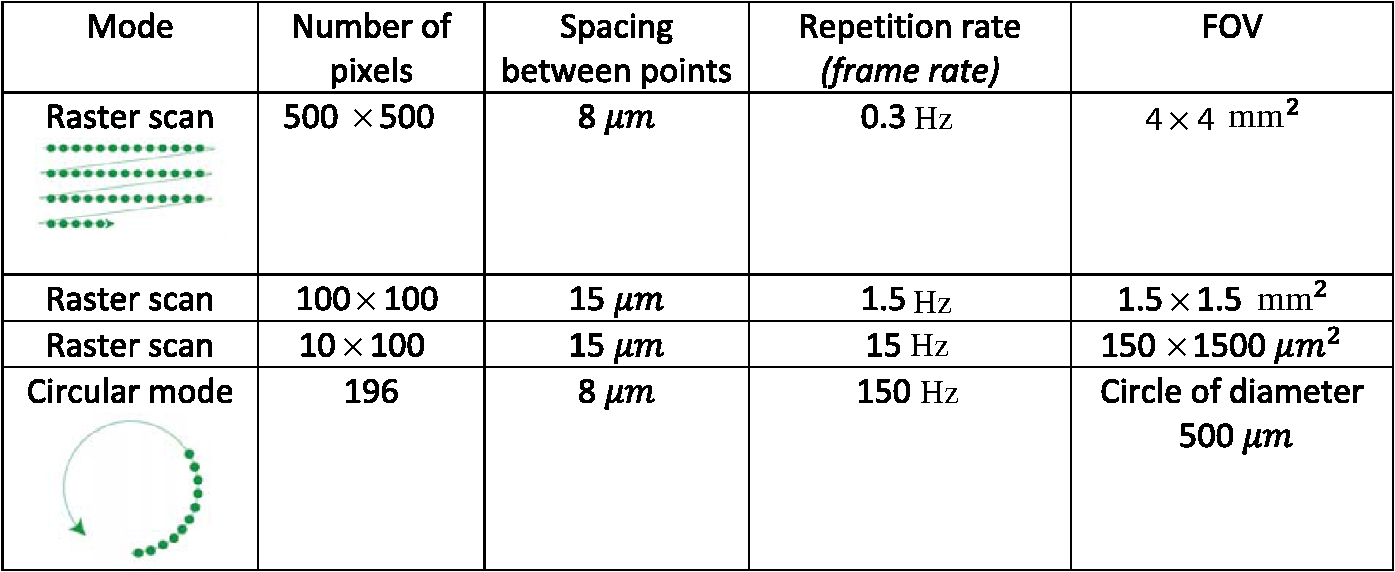

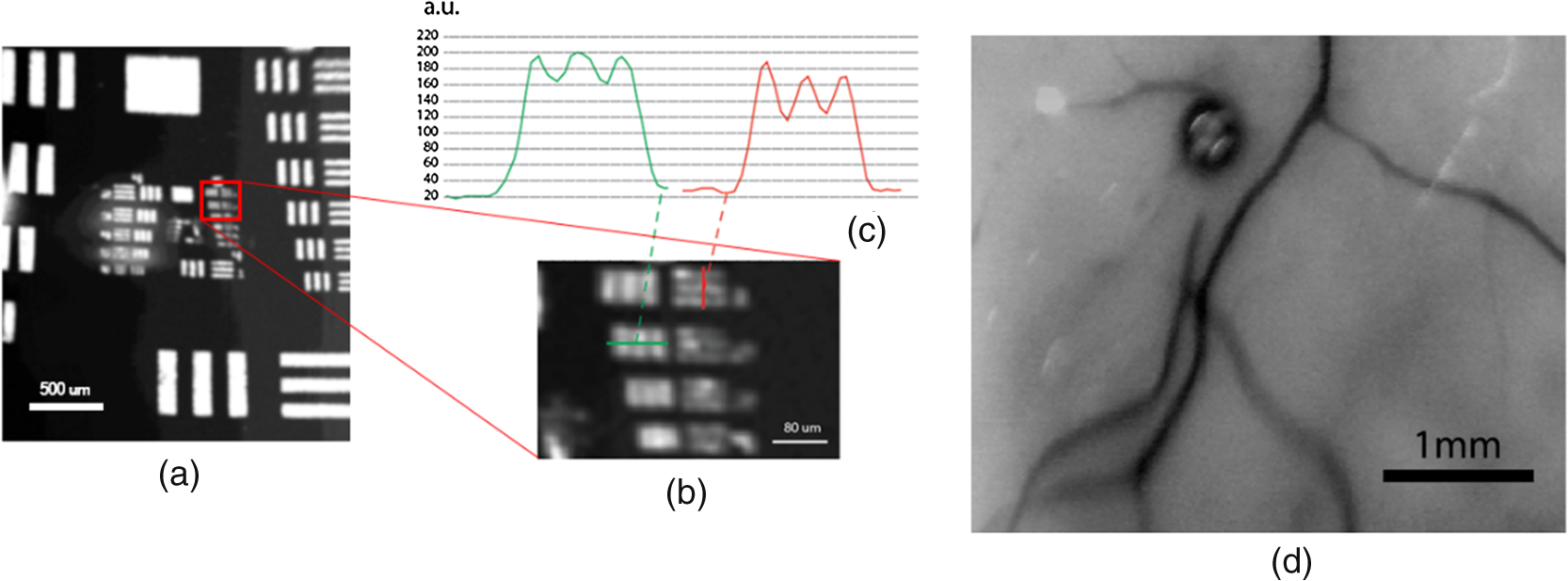

1. Introduction

The eye is a transparent window offering direct visual access to the retina and its blood vessels. Beyond the detection of eye diseases, several studies have shown that fundus imaging provides indirect information about general health. Molnar et al.1 showed that cyanide and arsenide poisoning can be detected by measuring real-time changes in blood coloration in the eye fundus. Koronyo-Hamaoui et al.2 observed β-amyloid plaques, a hallmark of Alzheimer's disease, in the retinas of mice. Furthermore, the human eye has been used for purposes outside the medical environment, such as personal identification3 and behavioral analysis. Indeed, eye movements are used for studying contextual awareness,4 human–computer interaction,5 mental state,6,7 user attention,8 and personal taste,9 and eye tracking has been successfully applied to advertising.10,11

Pictures of the eye fundus have traditionally been acquired with bulky instruments, and only recently has a new class of compact digital ophthalmoscopes been introduced. Systems such as EyeQuick™ or iExaminer use a digital camera (sometimes that of a smartphone) to acquire a retinal picture with bright-field illumination.12 Other laboratories have developed compact versions of the confocal scanning laser ophthalmoscope (cSLO), some of which also allow optical coherence tomography.13–15 To avoid relative movements between the acquisition system and the user, wearable ophthalmoscopes have been developed.16,17 In these devices, the use of a camera for data collection makes acquisition faster than in point-scanning systems. Wearable technology would be an ideal candidate for extracting parameters such as body health and eye tracking, but such a task requires a continuous acquisition system that does not obstruct the user's field of view (FOV).
The ability to leave the FOV undisturbed (in the following called “see-through”) is not present in current ophthalmoscopes, while it is the subject of intense research in the field of mixed reality displays.18,19 One pioneering work in this field was proposed by Tidwell20 in 1995 and later realized as a compact display in the shape of a pair of glasses.21 Tidwell's display, which scans a laser beam onto the retina, resembles the structure of the cSLO originally designed by Webb et al.,22,23 which makes it the optimal starting point for a see-through wearable ophthalmoscope. In this article, we present the first see-through ophthalmoscope designed for wearable technology. Such a device could be used for chronic real-time retinal measurements (e.g., poisoning monitoring) without interfering with the user's FOV, as well as for occasional eye measurements, such as eye tracking. The device's properties are studied with three-dimensional (3-D) simulations, and its imaging performance has been experimentally verified using resolution targets and ex-vivo human samples.

2. Scheme and Simulations

2.1. Optical Design

In the presented setup (see Fig. 1), the laser beam is delivered by a single-mode optical fiber followed by a polarizer. After passing through a polarizing beam splitter (PBS), it is directed onto the micromirror's surface, which scans the beam over the hologram. The hologram diffracts the beam toward the eye lens, which focuses it on the retina. At the retina, light is scattered back with mixed polarizations and travels back to the PBS. The part of the scattered beam whose polarization is perpendicular to that of the source is reflected by the PBS into the detection path, where it is focused into a multimode fiber that brings the signal to a photodetector. Rejecting light with the same polarization as the source beam suppresses the background generated by multiple reflections at the optical interfaces.

Fig. 1(a) Concept for retinal imaging with a wearable, see-through ophthalmoscope. Laser light provided by a single-mode fiber is collimated and angularly scanned by a micromirror (M), which illuminates the eye after diffraction from a volume holographic grating (H). Backscattered light from the eye fundus is then confocally collected by a fiber and sent to a photodetector for acquisition (box “detection and processing”). (b) The hologram H is made with two spherical waves whose centers are located at the pupil plane and at the micromirror, respectively. The geometry is defined by the distance between the micromirror and the hologram's center and by the distance between the hologram's center and the center of the pupil. (c) Expanded view of the optical engine. Light enters the system through the fiber and becomes vertically polarized after passing through the first linear polarizer. The backscattered beam from the eye is collected in the detection arm, where its horizontally polarized component is reflected by the PBS, filtered by a second polarizer, and collected by the multimode optical fiber. Polarization optics is used to suppress light reflections at interfaces.

The detection system is not included in Tidwell's original work, and particular care should be dedicated to making it compact for wearability. To keep the eyewear as light as possible, source and detection are kept separate from the optical engine and connected to it by optical fibers. Indeed, with current technology for lenses, polarizers, and micromirrors, the optical engine can be made compact and light, whereas sensitive photodetectors are in general bulkier, so we chose to place them outside the eyewear and connect them via optical fibers. Photodetectors have nevertheless shrunk in size and can be operated with a low-voltage power supply of 5 V (e.g., the H12402 micro photomultiplier tube from Hamamatsu). This is the largest component of the detection system.
The detection box can thus be expected to reach the size of a large smartphone (a few cubic centimeters), making it wearable in a pocket.

2.2. Geometrical Considerations

The see-through hologram presented in Fig. 1(b) is recorded in such a way that a diverging beam coming from the micromirror is diffracted toward the center of the pupil. To model imaging, we approximate the hologram as a lens that maps the plane containing the micromirror onto the plane containing the pupil center. In this approximation, which assumes a small collimated beam, the magnification between the two planes is given by the ratio of the hologram-to-pupil distance to the micromirror-to-hologram distance. This allows us to treat the micromirror as an aperture at the eye pupil's plane whose size equals the physical micromirror diameter multiplied by this magnification. Both distances are limited by wearability constraints to the range of tens of millimeters. A short micromirror-to-hologram distance is appealing since it provides a bigger aperture at the pupil's plane, so more light is collected. Nevertheless, this choice amplifies the difference in path length for different angles of illumination, which contributes to distortion of the final image. We also need to take into account that a bigger pupil diameter increases the aberrations introduced by the eye. However, for a healthy eye ( vision), a beam below 2 mm is diffraction limited, and high-order aberrations can be neglected.24 Another disadvantage of a large pupil aperture is that the hologram is designed for a small beam diameter, so its diffraction efficiency decreases rapidly when the impinging beam becomes large [Fig. 2(b)].

Fig. 2(a) Light detection efficiency for different areas of the hologram. As expected, the angular selectivity is higher for in-plane than for out-of-plane rays. (b) Hologram's diffraction efficiency for different beam sizes. The efficiency is almost constant up to 1.5 mm and decreases rapidly beyond this value. (c) Focusing effect of the hologram: the dashed red line marks where the focal points lie. Their different distances from the micromirror are the main cause of the nonuniform collection efficiency illustrated in (a).

The choice of the geometrical parameters affects the maximum achievable FOV. Indeed, in the paraxial approximation, the linear FOV equals the micromirror's scan range divided by the magnification between the scanning-mirror plane and the pupil plane, so a larger aperture at the pupil comes at the expense of a smaller FOV. From these considerations, and aiming for a flexible setup, we chose to place both the pupil and the micromirror at 30 mm from the hologram, obtaining unit magnification and an aperture at the pupil plane equal to the micromirror diameter.

2.3. Ray-Tracing Simulations

To take into account the effect of the hologram on the retinal image resolution, we simulated the system by 3-D ray tracing using MATLAB®. All lenses (including the eye lens) have been treated in the paraxial approximation, and the curvature of the eye fundus has been included. The eye lens has been modeled with a focal length of 17 mm,25 and the rays emerging from the eye fundus are generated following Lambert's cosine law.26 Part of the rays scattered from the retina is stopped by the eye pupil, while the rest is collected by the eye lens and collimated toward the hologram. There, the hologram's diffraction efficiency has been obtained using Kogelnik's theory,27 while the direction of the diffracted ray is estimated following Heifetz et al.28 The diffracted beam is focused at a distance that differs for each location on the hologram [Fig. 2(c)]. After the focal point, the beam diverges, and only a fraction of it is collected by the micromirror. The collected light is then collimated by the scan lens (not shown in Fig. 1) and focused by a lens [Fig. 1(c)] onto the pinhole, which in our case is a multimode fiber. Spatial resolution is estimated by computing the light collected from different retinal points for a fixed micromirror angle.
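The role of Lambert's cosine law in the return path can be illustrated with a minimal sketch. It is not the MATLAB ray tracer used here: it assumes a single on-axis retinal point, a flat emitter, and a 4-mm pupil placed 17 mm away (the eye focal length used as a stand-in for the retina-to-pupil distance), and ignores the eye lens, the fundus curvature, and the hologram. Under these assumptions the collected fraction has a closed form, sin²α = R²/(R² + L²), about 1.37%, which the sampled Lambertian rays should reproduce. The helper `stage_transmissions` (a hypothetical name) converts the cumulative power-budget percentages into per-stage transmissions.

```python
import math
import random


def lambertian_fraction_analytic(pupil_radius_mm: float, distance_mm: float) -> float:
    """Fraction of a Lambertian point emitter's power entering a coaxial circular pupil.

    The pupil subtends a cone of half-angle alpha with tan(alpha) = R / L.
    For a cosine-law emitter the enclosed fraction is sin^2(alpha) = R^2 / (R^2 + L^2).
    """
    r2 = pupil_radius_mm ** 2
    return r2 / (r2 + distance_mm ** 2)


def lambertian_fraction_monte_carlo(pupil_radius_mm: float, distance_mm: float,
                                    n_rays: int = 200_000, seed: int = 0) -> float:
    """Estimate the same fraction by sampling Lambertian ray directions."""
    rng = random.Random(seed)
    tan_max = pupil_radius_mm / distance_mm
    hits = 0
    for _ in range(n_rays):
        u = rng.random()            # uniform in [0, 1)
        sin_t = math.sqrt(u)        # cosine-law sampling: sin^2(theta) is uniform
        cos_t = math.sqrt(1.0 - u)
        # The ray crosses the pupil plane at radius L * tan(theta); keep it if inside R.
        if sin_t <= tan_max * cos_t:
            hits += 1
    return hits / n_rays


def stage_transmissions(fractions_percent):
    """Per-stage transmission from cumulative collected fractions (hypothetical helper)."""
    return [b / a for a, b in zip(fractions_percent, fractions_percent[1:])]


if __name__ == "__main__":
    # Assumed geometry: 4-mm pupil (R = 2 mm) 17 mm from an on-axis retinal point.
    frac = lambertian_fraction_analytic(2.0, 17.0)
    print(f"analytic:    {100 * frac:.2f} %")  # analytic:    1.37 %
    print(f"Monte Carlo: {100 * lambertian_fraction_monte_carlo(2.0, 17.0):.2f} %")
    # Cumulative fractions quoted in the power budget: pupil, hologram, micromirror.
    print(stage_transmissions([1.4, 0.8, 0.17]))
```

Sampling sin²θ uniformly is the standard way to draw cosine-law directions, since the Lambertian angular density is proportional to cos θ sin θ; the Monte Carlo estimate therefore converges to the analytic value.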
We obtain a lateral resolution of and an axial resolution of using a pinhole of 1 Airy unit. The axial resolution exceeds the retinal thickness (); hence, confocal detection does not provide depth selection of the retinal layers. However, the targeted applications are based on two-dimensional (2-D) imaging of the fundus, so depth selectivity is not required. This simulation has been performed only at the center of the eye and should be repeated for other illumination directions; the experimental results (see Sec. 3) nevertheless showed a similar lateral resolution across the entire FOV. A diffraction-limited spot on the retina would give a lateral resolution of , and, since all the lenses are considered ideal, the hologram is responsible for the difference. Due to the system asymmetry, the collected power varies across the eye, which results in a nonuniform reconstructed image. Figure 2(a) shows this effect: the selectivity is strong for in-plane beams and almost negligible for out-of-plane beams. The origin of this effect becomes clear by plotting the position of the focal point for different diffracted beams [dashed red line in Fig. 2(c)]. For in-plane rays, the focal distance is different for each source point, resulting in different beam sizes at the micromirror and thus in significant changes in the amount of collected light. Analyzing the power budget along the return path, we estimate that only 1.4% of the light scattered from the retina is collected by a 4-mm eye pupil; this fraction drops to 0.8% because of the hologram's efficiency and to 0.17% after the micromirror. Simulations also show that enlarging the eye pupil beyond a diameter of brings no significant additional light, as expected from the discussion in Sec. 2.2.

3. Experimental Results

To validate the system, we built a proof-of-concept setup on a breadboard.
The relative distances between the micromirror, the hologram, and the eye are chosen according to Sec. 2.2 and are the same as in a wearable setup. Miniaturization has not been attempted on the other components in the detection path. The micromirror chosen for the setup is a 2-D microelectromechanical (MEMS) scanning mirror by Hamamatsu (S13124-01M) with a maximum scan angle of , a 2-mm diameter reflective area, and resonant frequencies of 1000 and 350 Hz on the two axes. The mirror can be driven either in resonant or in linear mode. Resonant mode allows for a high line frequency, which improves the frame rate but at the cost of losing control over the mirror's position over time: the scan data are still acquired, but it is not possible to assign each value to the correct pixel. Linear mode requires a scanning frequency lower than the resonant frequency and allows complete point-by-point control of the micromirror. As a general rule, the lower the scan frequency, the better the control over the micromirror position, although some specific frequencies can still excite resonant modes and introduce image distortions. After testing the micromirror, we found 150 Hz to be a good value for the line frequency. The frame rate is the scanning (line) frequency divided by the number of lines, so a trade-off must be found between FOV and frame rate. Figure 3 compares scan settings that favor either a large FOV or a fast scan. However, even the fastest configuration is limited to , which is still too slow for eye tracking.

Fig. 3 Comparison among different scanning techniques. To prevent distortions due to resonance, the line frequency has been fixed to 150 Hz. The scanning mode can be raster or circular. The number of pixels, together with the spacing between adjacent points, determines the FOV.
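The FOV versus frame-rate trade-off for raster scanning can be made concrete with a short sketch. It assumes a square frame (as many pixels per line as lines), a fixed optical angle between adjacent pixels, and the paraxial relation of Sec. 2.2 in which the scan angle at the pupil equals the micromirror scan angle divided by the magnification. The class name, the 0.1-deg pixel spacing, and the line counts are hypothetical illustration values; only the 150-Hz line frequency comes from the text.

```python
from dataclasses import dataclass

LINE_FREQUENCY_HZ = 150.0  # line frequency chosen in the experiments


@dataclass
class RasterScan:
    """Hypothetical raster-scan settings; names and values are illustrative."""
    n_lines: int                # lines per frame (square frame assumed)
    step_deg: float             # optical angle between adjacent pixels at the micromirror
    magnification: float = 1.0  # pupil/micromirror magnification (unity in this setup)

    def frame_rate_hz(self, line_frequency_hz: float = LINE_FREQUENCY_HZ) -> float:
        # Frame rate = line frequency / number of lines.
        return line_frequency_hz / self.n_lines

    def fov_deg(self) -> float:
        # FOV = number of pixels x pixel spacing, mapped to the pupil with the
        # paraxial relation (pupil scan angle = mirror scan angle / magnification).
        return self.n_lines * self.step_deg / self.magnification


if __name__ == "__main__":
    for n in (50, 100, 150, 300):
        scan = RasterScan(n_lines=n, step_deg=0.1)
        print(f"{n:4d} lines: {scan.frame_rate_hz():5.2f} Hz, FOV {scan.fov_deg():5.1f} deg")
```

At a fixed pixel spacing, doubling the number of lines doubles the FOV but halves the frame rate, which is the trade-off summarized in Fig. 3.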
To obtain a faster scan, circular scanning has been shown to be a valid solution.29 In this technique, the laser follows a circular path, which yields a one-dimensional intensity profile along the circle. This technique is appropriate for feature tracking, but it cannot be used for imaging. The software provided with the micromirror also supports circular scanning. An important consideration is the maximum FOV achievable with this micromirror. Its maximum scan angle of corresponds to an FOV of 20 deg and an area on the retina of . However, for angles close to the maximum, the micromirror exhibits nonlinear effects, which appear as distortions in the final image. For this reason, we limited the FOV to a maximum of , which is large enough to track retinal veins.

The hologram is fabricated by interference using a 532-nm single-frequency laser (model MSL FN 532 from CNI) that illuminates a thin photopolymer layer, whose refractive index changes proportionally to the local light intensity. The laser light is split into two beams (reference and object beams). The reference beam is a spherical wave centered at the micromirror position that propagates to the photopolymer. The object beam is also a spherical wave, which travels from the photopolymer to its focal point at the pupil center. Exposure generates a refractive-index change of 0.03 in the photopolymer, a film of Bayfol® HX200 by Covestro. The photodiode used for acquisition is an APD410A2/M by Thorlabs. This setup has been tested with a USAF chart to measure the system's resolution and with an ex-vivo eye sample. The human eye was fixed in a paraformaldehyde solution and cut in half to remove the eye lens. This last step was required because the ex-vivo eye lens does not have the correct focal length, probably because of postmortem changes and fixation effects.
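How an index change of this size translates into diffraction efficiency can be sketched with Kogelnik's coupled-wave results for lossless, unslanted gratings read out exactly at the Bragg condition. The sketch makes several assumptions not stated in the text: the quoted 0.03 is treated as the index-modulation amplitude n1, the photopolymer thickness is set to a hypothetical 16 µm, the angle inside the layer is taken as normal incidence, and because the recording geometry does not fix whether the grating behaves as a transmission or a reflection grating, both cases are shown. It ignores the Bragg mismatch and beam-size effects that the full simulation includes.

```python
import math


def _coupling_strength(delta_n: float, thickness_um: float,
                       wavelength_um: float, cos_theta: float) -> float:
    """Kogelnik coupling parameter nu = pi * n1 * d / (lambda * cos(theta))."""
    if cos_theta <= 0:
        raise ValueError("cos_theta must be positive (angle measured inside the layer)")
    return math.pi * delta_n * thickness_um / (wavelength_um * cos_theta)


def transmission_efficiency(delta_n, thickness_um, wavelength_um, cos_theta=1.0):
    """Bragg-matched efficiency of a lossless, unslanted transmission grating: sin^2(nu)."""
    nu = _coupling_strength(delta_n, thickness_um, wavelength_um, cos_theta)
    return math.sin(nu) ** 2


def reflection_efficiency(delta_n, thickness_um, wavelength_um, cos_theta=1.0):
    """Bragg-matched efficiency of a lossless, unslanted reflection grating: tanh^2(nu)."""
    nu = _coupling_strength(delta_n, thickness_um, wavelength_um, cos_theta)
    return math.tanh(nu) ** 2


if __name__ == "__main__":
    DELTA_N = 0.03         # index change quoted in the text, treated here as n1
    THICKNESS_UM = 16.0    # hypothetical photopolymer thickness
    WAVELENGTH_UM = 0.532  # free-space recording/readout wavelength
    print("transmission:", transmission_efficiency(DELTA_N, THICKNESS_UM, WAVELENGTH_UM))
    print("reflection:  ", reflection_efficiency(DELTA_N, THICKNESS_UM, WAVELENGTH_UM))
```

The two expressions behave differently as the coupling grows: the transmission efficiency oscillates (and a strong grating can be overmodulated), while the reflection efficiency saturates toward unity, so the assumed grating type matters as much as the index change.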
Figure 4(a) has been obtained with a USAF resolution target and shows a horizontal resolution of and a vertical resolution of with a picture of . The image was acquired in . Different acquisition speeds have been tested without noticing any significant degradation of the image as long as the scanning frequency is not close to the resonant frequency. For high-speed acquisition near resonance, image distortions are present due to excitation of the mirror's resonant modes.

Fig. 4(a) Image of a USAF resolution target acquired with the see-through system of Fig. 1, with (b) a zoomed area. (c) Intensity cross section of the resolvable features. (d) Image of an ex-vivo human retinal sample, in which some retinal blood vessels can be clearly distinguished. The black spot is presumably an air bubble due to sample degradation. All the images have been acquired with a single scan, without averaging.

Eye safety needs to be taken into account, including the case of a failure of the scanning mirror. For visible light, we can calculate the maximum permissible exposure for a duration of 0.25 s, which corresponds to the eye's aversion response time. For infrared radiation, this is no longer possible because the user cannot see the radiation, and the exposure time could be arbitrarily long. However, many techniques can be implemented to check that the device is functioning correctly, such as a feedback system or a check on the acquired data. We therefore assumed a maximum exposure time of 10 s for infrared light. From these considerations, we obtain a limit of for a 515-nm beam and for 800-nm radiation.30

An image of the human eye has been acquired with an FOV of about . With an incident signal power of at 532 nm, we estimate that the ratio between the signal and the shot noise contribution is smaller than at the detector (photodiode APD410A2/M from Thorlabs); thus, they are negligible.
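The kind of calculation behind the exposure limits above can be sketched for a stationary beam (the failed-mirror case). The sketch assumes the classic point-source radiant-exposure expression MPE = 1.8 C_A t^0.75 mJ/cm² for exposures between 1 ms and 10 s, with C_A = 1 in the visible and C_A = 10^(2(λ − 0.7)) (λ in µm) up to 1.05 µm, and a 7-mm limiting aperture. These choices are assumptions of this sketch; it is not meant to reproduce the limits derived in this work from Ref. 30, which may include factors omitted here.

```python
import math

LIMITING_APERTURE_CM = 0.7  # assumed 7-mm limiting aperture for retinal exposure


def correction_ca(wavelength_um: float) -> float:
    """Assumed wavelength factor C_A: 1 in the visible, 10**(2*(lambda - 0.7)) up to 1.05 um."""
    if 0.4 <= wavelength_um < 0.7:
        return 1.0
    if 0.7 <= wavelength_um <= 1.05:
        return 10.0 ** (2.0 * (wavelength_um - 0.7))
    raise ValueError("this sketch only covers 0.4-1.05 um")


def max_power_w(wavelength_um: float, exposure_s: float) -> float:
    """Maximum permissible power of a stationary, small-spot beam entering the eye."""
    if not 1e-3 <= exposure_s <= 10.0:
        raise ValueError("this sketch only covers exposures between 1 ms and 10 s")
    # Point-source radiant-exposure MPE in J/cm^2 (assumed expression).
    mpe_j_cm2 = 1.8e-3 * correction_ca(wavelength_um) * exposure_s ** 0.75
    aperture_area_cm2 = math.pi * (LIMITING_APERTURE_CM / 2.0) ** 2
    # Average power = (energy per unit area / exposure time) * aperture area.
    return mpe_j_cm2 / exposure_s * aperture_area_cm2


if __name__ == "__main__":
    print(f"515 nm, 0.25 s: {max_power_w(0.515, 0.25) * 1e3:.2f} mW")  # 515 nm, 0.25 s: 0.98 mW
    print(f"800 nm, 10 s:   {max_power_w(0.800, 10.0) * 1e3:.2f} mW")  # 800 nm, 10 s:   0.62 mW
```

Because the radiant exposure grows only as t^0.75, the permissible average power falls as t^-0.25; this is why assuming a longer worst-case exposure for invisible infrared light lowers the power limit even though C_A relaxes it at 800 nm.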
All the images have been renormalized to compensate for the intensity inhomogeneity presented in Fig. 2(a). The obtained image quality allows us to observe blood vessels and features around the optic disc.

4. Conclusion and Further Developments

We have presented the proof of concept of the first see-through ophthalmoscope. The system has been designed for long-term acquisition without disturbing the user's activity, for applications such as health monitoring and eye tracking. The optical engine has been realized as a compact structure with the same component sizes and distances as a wearable setup. The measured imaging resolution is , in agreement with 3-D simulations. The system is not diffraction limited because of aberrations introduced by the hologram. A image of the retina of an ex-vivo eye sample was obtained in 3 s. The acquisition speed was not optimized in the current work; using a resonant scanning mirror would greatly improve it, making the system suitable for in-vivo applications. The acquired images resolve the vasculature, enabling the applications suggested above. The photopolymer used in this article is sensitive only to visible wavelengths, but if a completely disturbance-free device is needed, an infrared source should be chosen.

Disclosures

No conflict of interest needs to be declared. We also confirm that the work is original and is not currently submitted to any other journal.

References

1. L. R. Molnar et al., “Ocular scanning instrumentation: rapid diagnosis of chemical threat agent exposure,” Proc. SPIE 5403, 60 (2004). http://dx.doi.org/10.1117/12.546589

2. M. Koronyo-Hamaoui et al., “Identification of amyloid plaques in ex vivo retinas of Alzheimer's patients and noninvasive in vivo imaging of retinal plaques in the mouse model,” NeuroImage 54(Suppl. 1), S204–S217 (2011). http://dx.doi.org/10.1016/j.neuroimage.2010.06.020

3. C. Simon and I. Goldstein, “A new scientific method of identification,” N. Y. State J. Med. 35(18), 901–906 (1935).

4. L. T. Cheng and J. Robinson, “Personal contextual awareness through visual focus,” IEEE Intell. Syst. 16(3), 16–20 (2001). http://dx.doi.org/10.1109/5254.940021

5. M. Dorr, L. Pomarjanschi, and E. Barth, “Gaze beats mouse: a case study on a gaze-controlled breakout,” PsychNology J. 7(2), 197–211 (2009).

6. J. Lemos et al., “Measuring emotions using eye tracking,” in Proc. of Measuring Behavior, 226 (2008).

7. M. Vidal et al., “Wearable eye tracking for mental health monitoring,” Comput. Commun. 35, 1306–1311 (2012). http://dx.doi.org/10.1016/j.comcom.2011.11.002

8. M. I. Posner and S. Petersen, “The attention system of the human brain,” Annu. Rev. Neurosci. 13, 25–42 (1990). http://dx.doi.org/10.1146/annurev.ne.13.030190.000325

9. L. Zhang et al., “It starts with iGaze: visual attention driven networking with smart glasses,” in Proc. of the 20th Annual Int. Conf. on Mobile Computing and Networking (MobiCom 2014), 91–102 (2014).

10. C. E. Velazquez and K. E. Pasch, “Attention to food and beverage advertisements as measured by eye-tracking technology and the food preferences and choices of youth,” J. Acad. Nutr. Dietetics 114(4), 578–582 (2014). http://dx.doi.org/10.1016/j.jand.2013.09.030

11. Tobii Pro, “Tobii Pro Glasses 2,” (2017). https://www.tobiipro.com/product-listing/tobii-pro-glasses-2/ (accessed May 2017).

12. A. Russo et al., “A novel device to exploit the smartphone camera for fundus photography,” J. Ophthalmol. 2015, 1–5 (2015). http://dx.doi.org/10.1155/2015/823139

13. M. Friedewald, S. Wawrzyniak, and F. Pallas, “Ein Beitrag zum datenschutzfreundlichen Entwurf biometrischer Systeme,” Datenschutz und Datensicherheit 38(7), 482–486 (2014). http://dx.doi.org/10.1007/s11623-014-0211-9

14. F. La Rocca et al., “In vivo cellular-resolution retinal imaging in infants and children using an ultracompact handheld probe,” Nat. Photonics 10, 580–584 (2016). http://dx.doi.org/10.1038/nphoton.2016.141

15. N. D. Shemonski et al., “Computational high-resolution optical imaging of the living human retina,” Nat. Photonics 9, 440–443 (2015). http://dx.doi.org/10.1038/nphoton.2015.102

16. E. Lawson et al., “Computational retinal imaging via binocular coupling and indirect illumination,” in ACM SIGGRAPH, 51 (2012).

17. A. Samaniego et al., “mobileVision: a face-mounted, voice-activated, non-mydriatic ‘lucky’ ophthalmoscope,” in Proc. of Wireless Health 2014, Association for Computing Machinery (2014).

18. J. Orlosky, “Adaptive display of virtual content for improving usability and safety in mixed and augmented reality,” Osaka University (2016).

19. O. Cakmakci and J. Rolland, “Head-worn displays: a review,” J. Displ. Technol. 2(3), 199–216 (2006). http://dx.doi.org/10.1109/JDT.2006.879846

20. M. Tidwell et al., “The virtual retinal display—a retinal scanning imaging system,” in Proc. of Virtual Reality World (IDG 1995), 325–333 (1995).

21. M. Guillaumée et al., “Curved transflective holographic screens for head-mounted display,” Proc. SPIE 8643, 864306 (2013). http://dx.doi.org/10.1117/12.2000642

22. R. H. Webb, G. W. Hughes, and F. C. Delori, “Confocal scanning laser ophthalmoscope,” Appl. Opt. 26(8), 1492–1499 (1987). http://dx.doi.org/10.1364/AO.26.001492

23. R. H. Webb, G. W. Hughes, and O. Pomerantzeff, “Flying spot TV ophthalmoscope,” Appl. Opt. 19(17), 2991–2997 (1980). http://dx.doi.org/10.1364/AO.19.002991

24. F. W. Campbell and R. W. Gubisch, “Optical quality of the human eye,” J. Physiol. 186(3), 558–578 (1966). http://dx.doi.org/10.1113/jphysiol.1966.sp008056

25. R. Serway and R. Beichner, Physics for Scientists and Engineers with Modern Physics, 5th ed., Saunders College Publishing, San Diego, California (2000).

26. W. J. Smith, Modern Optical Engineering, 4th ed., McGraw-Hill, London, Great Britain (2007).

27. H. Kogelnik, “Coupled wave theory for thick hologram gratings,” Bell Syst. Tech. J. 48(9), 2909–2947 (1969). http://dx.doi.org/10.1002/bltj.1969.48.issue-9

28. A. Heifetz et al., “Angular directivity of diffracted wave in Bragg-mismatched readout of volume holographic gratings,” Opt. Commun. 280, 311–316 (2007). http://dx.doi.org/10.1016/j.optcom.2007.08.047

29. D. X. Hammer et al., “Adaptive optics scanning laser ophthalmoscope for stabilized retinal imaging,” Opt. Express 14, 3354–3367 (2006). http://dx.doi.org/10.1364/OE.14.003354

30. American National Standard for Safe Use of Lasers, Orlando, Florida (2014).

Biography

Dino Carpentras is a PhD student in photonics at the Polytechnic of Lausanne (EPFL), Switzerland. He is currently studying new techniques for retinal imaging in the lab of Professor C. Moser. His current work includes the development of a see-through ophthalmoscope and imaging of phase objects in the human retina. For his master's thesis, he worked on lab-on-a-disc technology under the guidance of Professor M. Madou at the University of California, Irvine.

Christophe Moser is currently an associate professor of optics in the Microengineering Department at EPFL. He obtained his PhD in optical information processing at the California Institute of Technology in 2000. He cofounded Ondax Inc., Monrovia, California, USA, and was its CEO for 10 years before joining EPFL in 2010. His interests are analog and digital holography for imaging, ultracompact endoscopy through fibers, 3-D endoprinting, and optics for solar concentration.