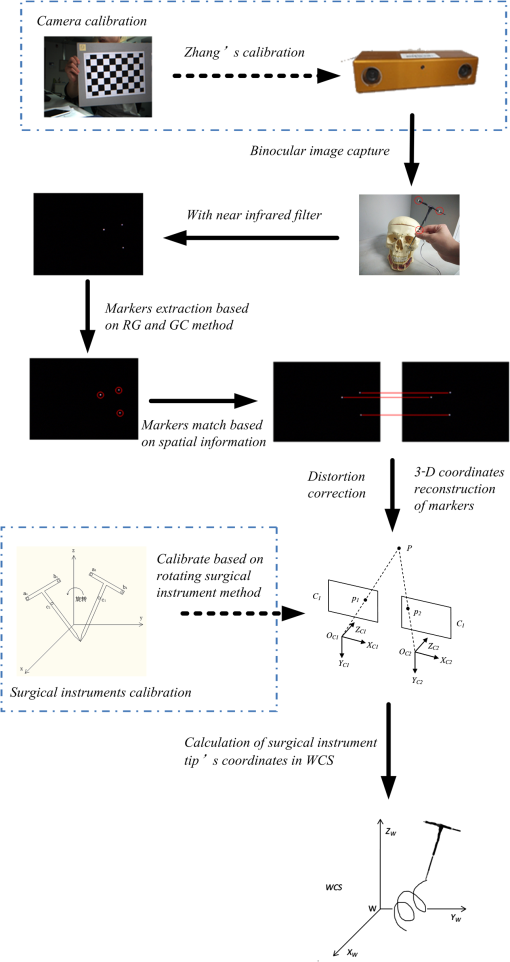

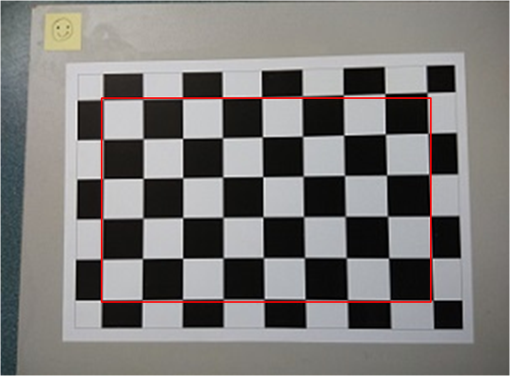

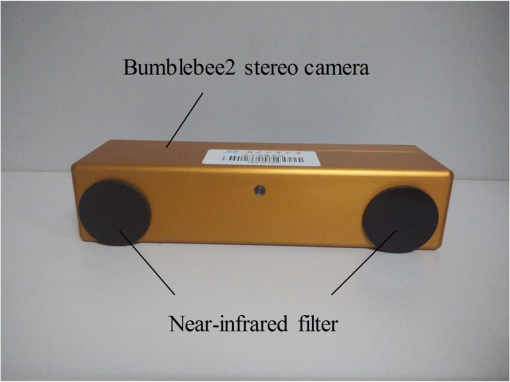

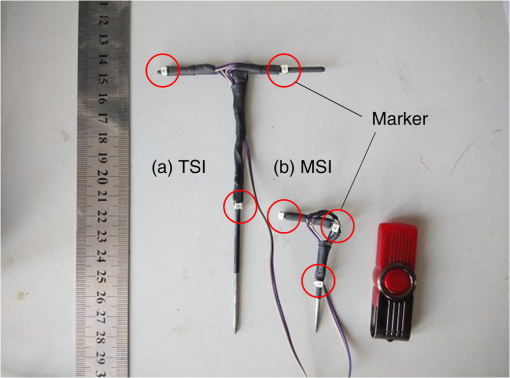

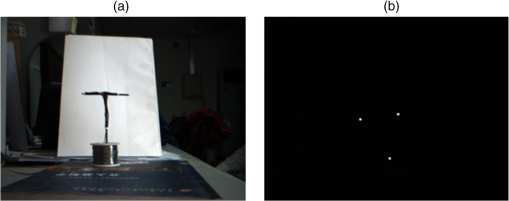

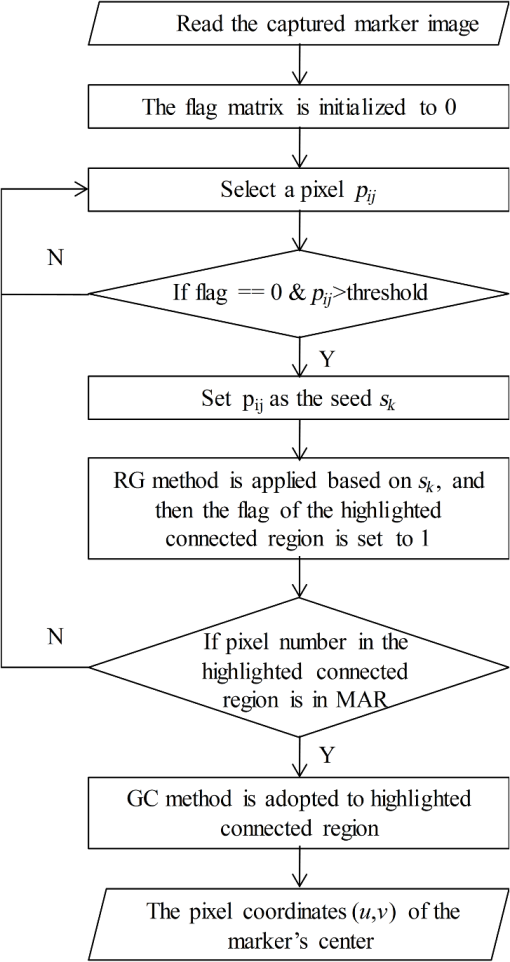

1. Introduction

An image-guided surgical navigation system overcomes the limits of traditional surgery and extends the surgeon’s limited field of view [1,2]. Tracking and positioning are the key techniques of such a system, and the accuracy of the tracking method directly determines the accuracy of the navigation system as a whole. In this work, an optical tracking system is designed that uses light-emitting markers, combined with self-developed image processing methods and an easy-to-implement three-dimensional (3-D) space tracking algorithm, to track surgical instruments with high accuracy.

The tracking methods most widely adopted in image-guided surgical navigation systems include articulated arms, active or passive optical tracking systems, sonic digitizers, and electromagnetic sensors [2–5]. Currently, the most promising tracking methods are optical and electromagnetic tracking. Compared with optical tracking, however, electromagnetic navigation is less precise, and its magnetic field is prone to distortion by large metal objects, which introduces measurement errors into the system [3,6]. The commercial optical tracking systems currently used in surgical navigation products mainly include the MicronTracker (Claron Technology Inc., Canada) [7], the Polaris optical tracking systems (Northern Digital Inc., Canada) [8], and the StealthStation system (Medtronic Inc., United States) [9]. Unfortunately, these products are expensive, and their proprietary techniques make them inconvenient for further development and customization.

Based upon stereotactic surgery principles, this paper proposes a low-cost, high-precision optical tracking system. In the proposed system, three optical LEDs installed on the surgical instrument are used as the markers, and a Bumblebee2 binocular camera captures the images of the markers.
To eliminate the interference of ambient light, a near-infrared filter is added in front of the camera lens. To extract the pixel coordinate of a marker’s center, the region growing (RG) method is first used to eliminate singular points, and the gray centroid (GC) method is then adopted to obtain the pixel coordinate of the marker’s center. This image processing method greatly enhances the anti-interference capability and improves the accuracy of the system. Next, the algorithm matches the markers in the left and right camera images based on the order of their spatial positions. As a last step, it derives the coordinates of the surgical instrument tip from the reconstructed 3-D coordinates of the markers, which completes the tracking system. Simulation verifies the accuracy and stability of the system and shows that it can meet the needs of surgical navigation.

2. Methods

2.1. Framework

The framework of the proposed optical tracking system, which includes the main and auxiliary processes, is shown in Fig. 1. The auxiliary processes, denoted within the dotted line, consist of the camera calibration and the surgical instrument calibration. They provide the main process with the parameters needed to calculate the coordinates of the markers and of the surgical instrument’s tip. In the main process, the stereo camera first captures the binocular images of the surgical instrument. Next, marker extraction, marker matching, distortion correction, and 3-D coordinate reconstruction are applied to calculate the world coordinates of the markers. Finally, the world coordinates of the surgical instrument’s tip are deduced from the world coordinates of the markers.

2.2. Camera Calibration

Zhang’s calibration method [10] is mature and has been widely used for camera calibration in recent years. It is a compromise between traditional camera calibration and self-calibration. The method is robust and does not require a high-precision positioning reference.
More importantly, its calibration accuracy meets the requirements, so Zhang’s method is used to calibrate the cameras. The specific calibration tool is the camera calibration toolbox [11]. A chessboard is designed as the calibration board, as shown in Fig. 2; the size of each square is .

2.3. Binocular Image Capture

A Bumblebee2 stereo camera [12] is the binocular vision acquisition device adopted in this system. As shown in Fig. 3, two near-infrared filters are installed in front of the camera lenses to remove the interference of ambient light. According to practical requirements [2], two kinds of surgical instruments are designed and employed in the proposed system: a typical surgical instrument (TSI) and a miniature surgical instrument (MSI). The TSI is suitable for most cases, while the MSI is suitable for surgeries with limited operating space. In the simulation, the two kinds of surgical instruments are tested separately. On each surgical instrument, three 850-nm near-infrared LEDs are installed and used as the markers, as shown in Fig. 4. The light of the three markers is captured by the Bumblebee2 camera, and the background is filtered out by the near-infrared filter. Captured images with and without the near-infrared filter are shown in Fig. 5, from which it can be seen that the ambient light is effectively filtered out.

2.4. Markers Extraction

In an optical tracking system, the pixel coordinate of a marker’s center directly affects the accuracy of the 3-D space coordinates and of the coordinates of the surgical instrument tip. In the captured images, the markers appear as highlighted regions. The pixel coordinate of a marker’s center is the center of its highlighted region, and these centers are used to calculate the world coordinates of the surgical instrument tip. An effective algorithm is therefore proposed to extract the pixel coordinate of a marker’s center from its highlighted region.
This algorithm is based on the RG and GC methods, as shown in Fig. 6. First, the RG method is used to find all highlighted connected regions in the image. In this step, a threshold serves as both the seed criterion and the region growth criterion; in our experiments, its value is set to half of the maximum pixel value. To avoid searching the same seed repeatedly, a flag matrix is adopted. Next, the area of each highlighted connected region is compared with the marker’s area range (MAR) to determine whether the region is noise or a marker. Noise in the CCD sensor is inevitable [13]. It is usually scattered and appears as small highlighted regions whose areas are much smaller than that of a marker in the captured image. Based on a pre-experiment, the MAR is set to [11, 74]; if the area of a highlighted region is less than 11, the region is considered to be noise. Finally, the GC method is applied to calculate the pixel coordinate of the marker’s center. The equation for the GC method is

$$x_0 = \frac{\sum_i x_i\, g_i}{\sum_i g_i}, \qquad y_0 = \frac{\sum_i y_i\, g_i}{\sum_i g_i}, \tag{1}$$

where $(x_i, y_i)$ is the $i$’th pixel coordinate in the marker’s area, whose gray value is $g_i$, and $(x_0, y_0)$ is the derived pixel coordinate of the marker’s center.

The procedures of the markers’ extraction algorithm are as follows:
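The extraction steps described in this section (threshold at half of the maximum gray value, region growing with a flag matrix, area filtering against the MAR, and the gray centroid) can be illustrated with a minimal Python sketch. It is an illustration under stated assumptions, not the authors’ implementation: the function name `extract_marker_centers`, the use of 4-connectivity, and the `>=` comparison against the threshold are all assumptions, since the text does not specify them.

```python
from collections import deque

import numpy as np


def extract_marker_centers(img, area_range=(11, 74)):
    """Return gray-centroid (x, y) centers of bright regions whose area is in area_range.

    Hypothetical helper: 4-connectivity and the >= threshold test are assumptions.
    """
    img = np.asarray(img, dtype=np.float64)
    if img.max() <= 0:
        return []                                 # nothing bright in the image
    threshold = img.max() / 2.0                   # half of the maximum gray value
    visited = np.zeros(img.shape, dtype=bool)     # flag matrix: avoids repeated seeds
    height, width = img.shape
    centers = []
    for r in range(height):
        for c in range(width):
            if visited[r, c] or img[r, c] < threshold:
                continue
            # Region growing from the seed (r, c).
            queue = deque([(r, c)])
            visited[r, c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < height and 0 <= nx < width
                            and not visited[ny, nx] and img[ny, nx] >= threshold):
                        visited[ny, nx] = True
                        queue.append((ny, nx))
            # Regions outside the marker area range (MAR) are treated as noise.
            if not area_range[0] <= len(pixels) <= area_range[1]:
                continue
            ys, xs = np.array(pixels).T
            g = img[ys, xs]
            # Gray centroid: gray-value-weighted mean of the pixel coordinates.
            centers.append((float((xs * g).sum() / g.sum()),
                            float((ys * g).sum() / g.sum())))
    return centers
```

Regions are returned in row-major scan order of their seeds, so a later matching step should still sort the centers explicitly rather than rely on this order.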

2.5. Markers Matching

The proposed optical tracking system is based on a binocular camera. According to the model for 3-D coordinate reconstruction of markers (Sec. 2.6), the positions of each pair of matched markers are required. There are three markers in each of the left and right images. The markers at the same location in the left and right images must be paired before 3-D reconstruction, and the spatial positions of the markers are used for this matching. Because the positions of the markers on the surgical instrument are fixed, their arrangement in the image is also fixed. The three markers in each image are sorted by position, from top to bottom and from left to right, and the marker pairs are then matched by their order in the sorted lists.

2.6. Three-Dimensional Coordinates Reconstruction of Markers

The mathematical model of the camera [14,15], which establishes the relationship between the pixel coordinate of a marker’s center and its 3-D world coordinates, is

$$Z_c \begin{bmatrix} u \\ v \\ 1 \end{bmatrix} = M_1 \begin{bmatrix} X_c \\ Y_c \\ Z_c \end{bmatrix} = M_1 M_2 \begin{bmatrix} X_w \\ Y_w \\ Z_w \\ 1 \end{bmatrix}, \tag{2}$$

where $(u, v)$ is the pixel coordinate of the marker’s center, $(X_c, Y_c, Z_c)$ are the coordinates of the marker in the camera coordinate system, and $(X_w, Y_w, Z_w)$ are the coordinates of the marker in the world coordinate system (WCS). $M_1$ and $M_2$ are the internal and the external parameter matrices of the camera, which can be obtained by camera calibration.

For the binocular stereo camera, Eq. (2) is established once for each camera, as shown in Eq. (3). The world coordinates of the markers can be derived from

$$Z_{cl} \begin{bmatrix} u_l \\ v_l \\ 1 \end{bmatrix} = M_{1l} M_{2l} \begin{bmatrix} X_w \\ Y_w \\ Z_w \\ 1 \end{bmatrix}, \qquad Z_{cr} \begin{bmatrix} u_r \\ v_r \\ 1 \end{bmatrix} = M_{1r} M_{2r} \begin{bmatrix} X_w \\ Y_w \\ Z_w \\ 1 \end{bmatrix}, \tag{3}$$

where the subscripts $l$ and $r$ denote the left and right cameras.

2.7. Distortion Correction

The pinhole imaging model used in this paper is an ideal linear model. In the process of manufacturing and installation, however, camera lens distortion is inevitable [16]. To eliminate its influence on the positioning accuracy, the lens distortion must be corrected.
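As an illustration of the matching rule of Sec. 2.5 and the stereo reconstruction of Sec. 2.6, the following Python sketch sorts the marker centers and solves the two projection equations for one matched pair by linear least squares. The function names and the use of combined 3×4 projection matrices (internal times external parameters) are assumptions made for this sketch, not the authors’ implementation, and lens distortion is ignored here.

```python
import numpy as np


def sort_markers(centers):
    """Sort (x, y) pixel centers from top to bottom, then left to right."""
    return sorted(centers, key=lambda p: (p[1], p[0]))


def match_markers(left_centers, right_centers):
    """Pair markers by their rank in the sorted order (hypothetical helper)."""
    return list(zip(sort_markers(left_centers), sort_markers(right_centers)))


def triangulate(P_left, P_right, uv_left, uv_right):
    """Least-squares world coordinates of one matched marker pair.

    P_left and P_right are 3x4 projection matrices (assumed already calibrated).
    Each view gives two linear equations: (u * P[2] - P[0]) . [X, 1] = 0 and
    (v * P[2] - P[1]) . [X, 1] = 0.
    """
    rows = []
    for P, (u, v) in ((P_left, uv_left), (P_right, uv_right)):
        P = np.asarray(P, dtype=np.float64)
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.array(rows)
    # Move the homogeneous column to the right-hand side and solve the 4x3 system.
    X, *_ = np.linalg.lstsq(A[:, :3], -A[:, 3], rcond=None)
    return X
```

Sorting purely by pixel row can swap two markers whose rows are nearly equal, so a practical version would likely need a tolerance or a geometric check; that refinement is outside what the text describes.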
According to the camera model of Heikkilä and Silvén [17], the distortion model adopted in this paper is

$$\begin{aligned} x_d &= x\,(1 + k_1 r^2 + k_2 r^4 + k_3 r^6) + 2 p_1 x y + p_2 (r^2 + 2x^2), \\ y_d &= y\,(1 + k_1 r^2 + k_2 r^4 + k_3 r^6) + p_1 (r^2 + 2y^2) + 2 p_2 x y, \end{aligned} \tag{4}$$

where $(x_d, y_d)$ and $(x, y)$ are the actual and the ideal pixel coordinates of a marker’s center, $r^2 = x^2 + y^2$, $k_1$, $k_2$, and $k_3$ are the radial distortion coefficients, and $p_1$, $p_2$ are the tangential (decentering) distortion coefficients.

To verify the effect of the lens distortion correction module in the proposed optical tracking system, an experiment is carried out. Three kinds of distances (short, medium, and long) are measured by the proposed tracking system. For each kind of distance, the endpoint coordinates are measured both with and without distortion correction. Each distance is measured 50 times, and the average value is calculated. The actual distance is measured with a Vernier caliper. The distances measured by the tracking system are compared with the actual distances; the error is the difference between them, as shown in Table 1.

Table 1 Comparison of the results with and without distortion correction.

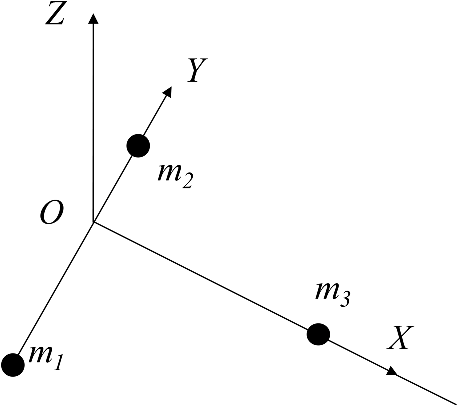

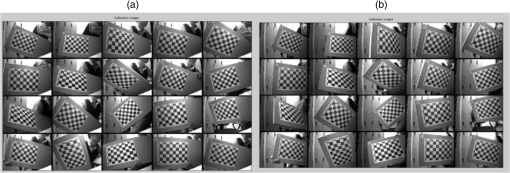

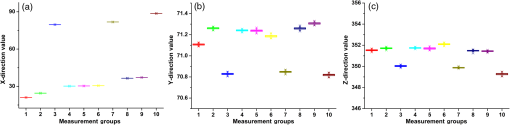

From Table 1, it can be seen that the errors with distortion correction are smaller than those without it. As the distance increases, the error with distortion correction becomes smaller.

2.8. Surgical Instrument Calibration

The purpose of optical tracking is to obtain the world coordinates of the surgical instrument’s tip. Through 3-D reconstruction, the world coordinates of the markers can be obtained. According to the shape of a surgical instrument, a surgical instrument coordinate system (SICS) can be established, as shown in Fig. 7. The coordinates of the surgical instrument tip in SICS are , and in WCS. The rotation matrix and the translation matrix of SICS with respect to WCS are and , then and can be calculated by markers’ coordinates , , and [18] where the coordinates of are numerically equal to .

The purpose of the surgical instrument calibration is to obtain the coordinates of the tip in SICS. Equations (5)–(7) are used to calculate the world coordinates of the surgical instrument tip from the markers’ world coordinates. The rotating surgical instrument method [16] is used to obtain the tip coordinates in SICS. The idea is to fix the position of the surgical instrument tip and rotate the surgical instrument. Each marker on the surgical instrument then satisfies the spherical equation [Eq. (8)], in which the surgical instrument tip is the center of the sphere. The world coordinates of the surgical instrument tip can be obtained by solving Eq. (10).

Assume that $n$ images are obtained by rotating the surgical instrument. The coordinate of the $i$’th marker ($i = 1, 2, 3$) in the $j$’th image ($j = 1, \dots, n$) in the WCS is defined as $(x_{ij}, y_{ij}, z_{ij})$. The world coordinates of the tip are denoted as $(x_0, y_0, z_0)$. Under the rigid body constraint, the distance $d_i$ between the $i$’th marker and the tip is constant:

$$(x_{ij} - x_0)^2 + (y_{ij} - y_0)^2 + (z_{ij} - z_0)^2 = d_i^2. \tag{8}$$

Eq. (8) has $3n$ equations. For each $i$, subtracting the $(j-1)$’th equation from the $j$’th equation ($j = 2, \dots, n$) gives

$$2(x_{ij} - x_{i,j-1})\,x_0 + 2(y_{ij} - y_{i,j-1})\,y_0 + 2(z_{ij} - z_{i,j-1})\,z_0 = x_{ij}^2 - x_{i,j-1}^2 + y_{ij}^2 - y_{i,j-1}^2 + z_{ij}^2 - z_{i,j-1}^2. \tag{9}$$

Eq. (9) has $3(n-1)$ equations, which can be expressed in matrix form as

$$A X = b, \qquad X = (x_0, y_0, z_0)^T, \tag{10}$$

where $A$ is a matrix of $3(n-1)$ rows by three columns, and $X$ and $b$ are vectors. When $n > 2$, Eq. (10) is overdetermined, and the least squares method can be used to solve for $X$.

In this paper, the calibration is carried out with 20 rotations of the surgical instrument. The $j$’th rotation matrix and the translation matrix of the SICS relative to the WCS are and , and the tip coordinate can be calculated by

3. Simulation Results

To verify the stability and accuracy of the system, stability, space distance, and rotation tests were carried out for the TSI and MSI. The stability experiment measures the system stability under repeated measurement. The accuracy of the system is measured by the space distance experiment, in which the absolute error is obtained by comparing the calculated distance between two points with the distance measured by a Vernier caliper. Furthermore, the stability and accuracy of the tip coordinates are tested by a rotation experiment at different angles.

3.1. Calibration Settings

Twenty pairs of left and right images of the calibration board are captured at different locations, as shown in Fig. 8. Internal and external parameters of camera calibration of each camera are , and , , respectively

Fig. 8 Camera calibration images: (a) left camera calibration images and (b) right camera calibration images.

The left camera’s optical center is used as the origin of the WCS. In this system, is a fixed value, as shown

3.2. Stability Test

When the optical tracking system is working, it must be sufficiently stable. Even if the surgical instrument remains still, the calculated tip coordinates may change because of changes in the capture environment and the stability of the algorithm. To test the stability of the system, 10 different positions are selected randomly, and the surgical instrument is kept still at each position. For each position, 100 sets of images are captured for repeated measurement.
Then, the standard deviations (SDs) in the x-, y-, and z-directions are calculated and used to evaluate the stability of the system. Both kinds of surgical instruments are tested in the experiment. The results are shown in Table 2. Figure 9 shows another representation of the Table 2 results for the MSI.

Table 2 The coordinate stability results for TSI and MSI.

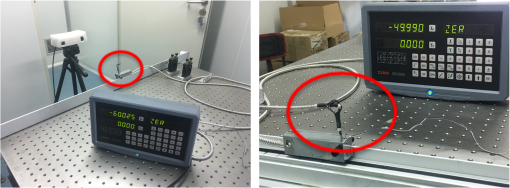

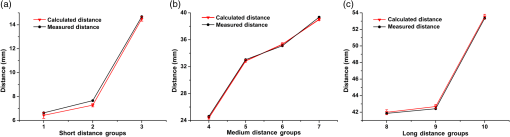

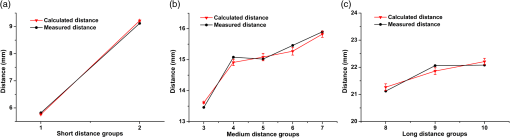

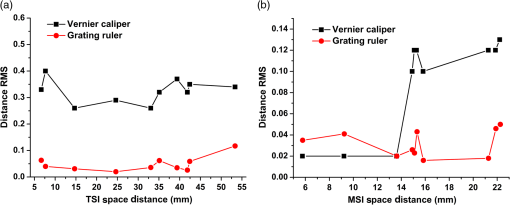

Fig. 9 The 3-D coordinate stability results for MSI. (a) Results of the x-direction measurement: the x-direction SD ranges from 0.01003 to 0.01677, and the x-direction CV ranges from 0.012% to 0.068%; (b) results of the y-direction measurement: the y-direction SD ranges from 0.00787 to 0.01148, and the y-direction CV ranges from 0.011% to 0.016%; and (c) results of the z-direction measurement: the z-direction SD ranges from 0.05100 to 0.09452, and the z-direction CV ranges from 0.014% to 0.027%.

From the above results, it can be seen that the average SDs in the x-, y-, and z-directions over the 10 positions are 0.01799, 0.00783, and 0.08112 mm for TSI and 0.01289, 0.00942, and 0.06751 mm for MSI, respectively. In Table 2, the worst results are 0.09708 and 0.09452 mm for the two instruments. From Fig. 9, it can also be seen that the ranges of the three coordinates are about (45, 75 mm), (150, 153 mm), and (330, 390 mm), respectively. As a result, the SD in the z-direction appears larger than in the other two directions, but the coefficients of variation (CVs) of the three directions in Fig. 9 lie in almost the same range.

3.3. Space Distance Test

Since the absolute coordinates obtained by the optical tracking system are in the WCS, it is difficult to evaluate the accuracy of the world coordinates directly. Therefore, to test the position accuracy of the simulated surgical instruments, an indirect measurement method is adopted. Within the effective field of the optical tracking system, the tip is placed at two randomly chosen locations, and the distance between the two measured tip coordinates is calculated. The tracking accuracy of the system is then evaluated by comparing this distance with the actual distance measured using a Vernier caliper and a grating ruler.
Ten groups of different distances, covering short, medium, and long distances, were measured by both the Vernier caliper and the grating ruler, and each tip coordinate was measured 50 times. The average absolute errors using the Vernier caliper are shown in Figs. 10 and 11. The average absolute errors of the two surgical instruments measured against the Vernier caliper are 0.23 and 0.13 mm.

Fig. 10 Space distance test results of TSI by Vernier caliper, comparing the calculated and measured distances. (a) Short-distance (0 to 15 mm) measurement: the space distance error ranges from 0.18 to 0.38 mm; (b) medium-distance (20 to 40 mm) measurement: the space distance error ranges from 0.19 to 0.34 mm; and (c) long-distance (40 to 55 mm) measurement: the space distance error ranges from 0.13 to 0.26 mm.

Fig. 11 Space distance test results of MSI by Vernier caliper, comparing the calculated and measured distances. (a) Short-distance (0 to 10 mm) measurement: the space distance error ranges from 0.06 to 0.10 mm; (b) medium-distance (10 to 20 mm) measurement: the space distance error ranges from 0.06 to 0.19 mm; and (c) long-distance (20 to 25 mm) measurement: the space distance error ranges from 0.13 to 0.20 mm.

However, the results of the proposed optical tracking system have more than four decimal places, and the last several decimal places have been rounded for comparison with the results of the Vernier caliper. To evaluate the accuracy of the proposed optical tracking system more precisely, a more accurate measurement device, a grating ruler, is used. The brand of the grating ruler is SION, and the model is KA-300/5U/1020 mm.
The grating ruler is rigidly fixed on a precision optical table to ensure stability, and the TSI or MSI is then fixed to the grating ruler, as shown in Figs. 12 and 13. With the results measured by the Vernier caliper as a reference, new distance measurements are made using the grating ruler, as shown in Tables 3 and 4 and Fig. 14. From Tables 3 and 4, it can be seen that higher measurement accuracy is obtained by using the grating ruler. The fluctuation range of the distance root mean square error (RMSE) also becomes smaller, as shown in Fig. 14.

Table 3 Space distance test results for TSI.

Table 4 Space distance test results for MSI.

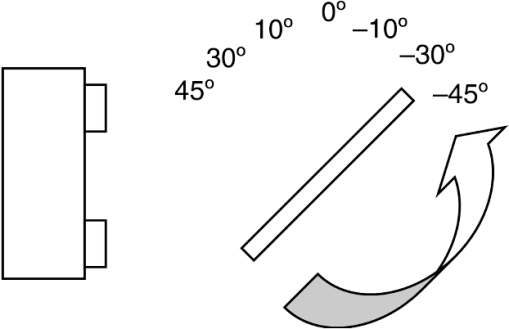

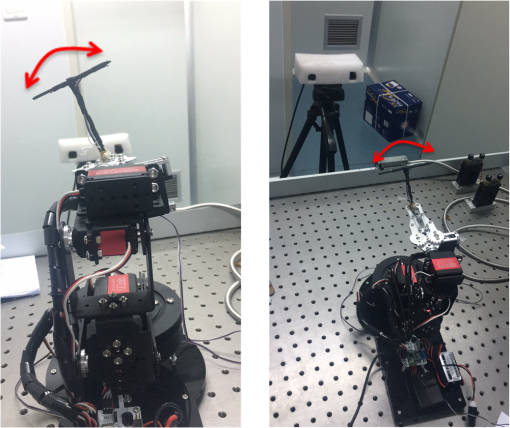

Fig. 14 Fluctuation ranges of distance RMSE for (a) TSI and (b) MSI.

The average absolute distance error is 0.23 mm for TSI and 0.13 mm for MSI by Vernier caliper, and 0.041 mm for TSI and 0.024 mm for MSI by grating ruler. The distance RMSE is 0.32 mm for TSI and 0.09 mm for MSI by Vernier caliper, and 0.049 mm for TSI and 0.032 mm for MSI by grating ruler. The results show that the proposed optical tracking system achieves high accuracy and can meet the accuracy requirement of space tracking equipment in surgical navigation.

3.4. Rotation Test

In the rotation experiment, the stability of the tip coordinates under rotation of the surgical instrument and the accuracy of the measured rotation angle are tested. When the camera captures the marker images from different angles, the size of the marker in the image changes constantly. To verify that the calculated coordinates are accurate for any capturing angle, a rotating stability experiment is designed. Because the MSI is too small for this experiment, only the TSI is tested. As shown in Fig. 15, the tip of the TSI is fixed. Then, the TSI is rotated from to 45 deg. Each angle is measured 100 times, and the values of the tip coordinates are averaged. In this experiment, the tip of the TSI does not move, so in theory its coordinates are the same at all angles. Seven different rotation angles are tested, and the tip coordinates are shown in Table 5.

Table 5 Coordinates of the TSI tip at different rotation angles.

The SDs of the coordinates of the TSI tip over the different rotation angles are 0.08074, 0.28266, and 0.33407 mm in the x-, y-, and z-directions, respectively. These data show that the coordinates of the tip do not change significantly, which indicates that the proposed tracking algorithm keeps the coordinates of the TSI tip nearly unchanged under different capturing angles. The proposed tracking system therefore has high rotational stability.

To obtain a more accurate rotation angle, the TSI or MSI is fixed on a 6-DOF mechanical arm. The brand of the 6-DOF mechanical arm is LOBOT, and the model is MK12352. Twelve angle groups are measured from 5 deg to 60 deg. Each angle is measured 100 times by TSI or MSI, and the results are averaged. The rotation angle is measured as indicated by the red arrow in Fig. 16, and the results are shown in Table 6. From Table 6, it can be seen that the average absolute angle error over the 12 groups is 0.36 deg for TSI and 0.28 deg for MSI.

Table 6 Rotation angle measurement results.

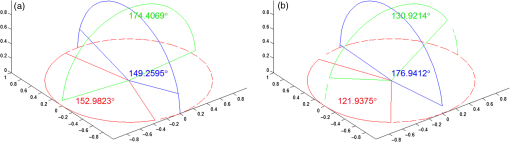

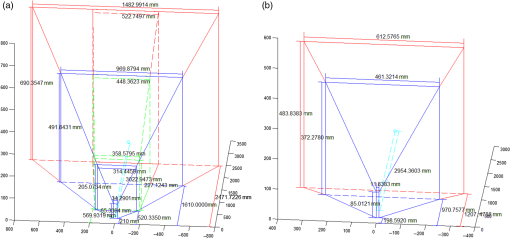

3.5. Tracking Angle and Area Range Test

The tracking angle range and area range of the proposed optical tracking system are shown in Figs. 17 and 18, respectively.

Fig. 17 The angle view range of the proposed optical tracking system: (a) the angle view range for TSI and (b) the angle view range for MSI.

Fig. 18 The spatial measurement range of the proposed optical tracking system: (a) the spatial measurement range for TSI and (b) the spatial measurement range for MSI.

The angle view range is limited by the instrument, on which there are only three markers. Rotation around the -axis ranges from to 74.6440 deg for TSI, and from to 53.9503 deg for MSI, which is the red area in Fig. 17. Rotation around the -axis ranges from to 88.1640 deg for TSI, and from to 89.0361 deg for MSI, which is the green area in Fig. 17. Rotation around the -axis ranges from to 75.04111 deg for TSI, and from to 73.4661 deg for MSI, which is the blue area in Fig. 17. The measurable height and width depend on the distance in the z-direction. In the z-direction (from near to far), the common measurable distance ranges from 219.0297 to 2577.6776 mm for TSI and from 198.5920 to 612.5765 mm for MSI. The farthest distance that can occasionally be measured is 3022.9473 mm for TSI and 2954.3603 mm for MSI, which is shown as the blue point in Fig. 18. The dashed-line space is more precise than the solid-line space and can be measured with more instrument poses. The system experiment shows that the measurable range of the proposed optical tracking system is sufficient for surgical navigation.

3.6. Cost Estimation

The Polaris Spectra and Polaris Vicra systems from Northern Digital Inc. (NDI) are popular commercial surgical tracking systems. The prices differ for different types of the system, e.g., systems with active or passive markers. The cheapest costs more than $31,340, and the most expensive costs $52,496.
The optical positioning system proposed in this paper mainly includes a 1394 card, a binocular camera, LED markers, infrared filters, wires, the proposed positioning software, and so on. The estimated cost of the proposed optical tracking system is $3,366, as shown in Table 7.

Table 7 Cost estimation of the proposed optical tracking system.

Taking into account the ratio between commercial price and cost, if the ratio is 3, the total price is about $10,000. This is substantially lower than the prices of the existing commercial tracking systems.

4. Discussion

In this paper, an optical tracking system based on a Bumblebee2 binocular camera is designed. 850-nm near-infrared LEDs are used as the markers of the surgical instruments, and an infrared filter installed in front of the camera lens effectively eliminates the interference of ambient light. In the space location algorithm, the RG method finds the highlighted connected regions of the markers in the image and eliminates singular points, and the GC method then calculates the pixel coordinate of each marker’s center. With an optical tracking system, it is difficult to match the markers in a traditional way. A matching method for the 3-D reconstruction based on the position order of the markers is therefore proposed, and the results are satisfactory. Finally, the rotating surgical instrument method is used to calibrate the surgical instrument and obtain the positional relationship between the tip and the SICS, which enables tracking of the surgical instrument tip.

In the stability experiment, the SDs of the tip coordinates in the x-, y-, and z-directions are measured and calculated at 10 different positions for TSI and MSI. The worst results of the two kinds of surgical instruments are 0.09708 and 0.09452 mm, respectively. The average SDs are 0.01799, 0.00783, and 0.08112 mm in the x-, y-, and z-directions for TSI, and 0.01289, 0.00942, and 0.06751 mm for MSI. In the x- and y-directions, the SD is very small. The SD is slightly larger in the z-direction because the z coordinate is also much larger than the coordinates in the other two directions. The CV results of the three directions lead to the same conclusion.
In the space distance experiment, the distance RMSE is 0.32 mm for TSI and 0.09 mm for MSI by Vernier caliper, and 0.049 mm for TSI and 0.032 mm for MSI by grating ruler. The Polaris Spectra and Polaris Vicra systems of NDI achieve an accuracy of 0.25 mm RMS in the pyramid volume [8]. In the proposed optical tracking system, the measurable distance in the z-direction (from near to far) ranges from 219.0297 to 2577.6776 mm for TSI and from 198.5920 to 612.5765 mm for MSI. The farthest distance that can occasionally be measured is 3022.9473 mm for TSI and 2954.3603 mm for MSI. In the existing systems, the measurable distance in the z-direction ranges from 950 to 3000 mm for the Polaris Spectra system and from 557 to 1336 mm for the Polaris Vicra system. Because the surgical instruments were welded by ourselves and the luminescent markers are oriented in a single direction, the measurable range of the TSI and MSI is not as large as that of the Polaris Spectra and Polaris Vicra systems. However, the nearest measurable distances for the TSI and MSI are shorter than those of the Polaris Spectra and Polaris Vicra systems, which is advantageous for surgical operations that commonly have a small operating field. The system experiment shows that the proposed optical tracking system and the corresponding space tracking algorithm have high stability and accuracy. Moreover, compared with the existing systems, the cost of the proposed optical tracking system is greatly reduced.

5. Conclusions

In this paper, a low-cost, open optical tracking system based on binocular vision theory is proposed. The system uses a stereo camera combined with self-developed image processing methods and an easy-to-implement 3-D space tracking algorithm to track surgical instruments with high accuracy.
Experimental results show that the system has high stability and accuracy and can provide accurate guidance for surgical navigation. The system and the related algorithm are a meaningful exploration in the field of surgical navigation. For practical application, there is room for improvement. For example, a battery could supply power to the markers, which would remove the wire connected to the surgical instrument and let the instrument move more freely. In the future, we will design a power supply circuit that allows button batteries to be installed in the surgical instruments. The fast calibration algorithm needs further study. The pixel coordinate extraction and marker matching algorithms can also be optimized so that the tracking accuracy of the system is improved. The real-time synchronization needs to be further optimized to achieve higher system accuracy. As operations demand ever greater accuracy, the accuracy and stability of the optical tracking system need continuous improvement.

Acknowledgments

The authors would like to thank Mr. Hans Hermans for manuscript editing, Boqi Jia and Mengshi Zhang for the newly revised experiment, and Dr. Yu Wang for helping to buy the grating ruler and the 6-DOF mechanical arm; we are also thankful for Zhang’s calibration method. This work was supported in part by the National Natural Science Foundation of China (Nos. 61672362 and 61272255), the Beijing Natural Science Foundation (No. 4172012), and the Scientific Research Common Program of Beijing Municipal Commission of Education (No. KM201710025011).

References

1. A. Wagner et al., “Image-guided surgery,” Int. J. Oral Maxillofac. Surg. 25(7), 147–151 (1996). http://dx.doi.org/10.1016/S0901-5027(96)80062-2

2. F. A. Jolesz, Intraoperative Imaging and Image-Guided Therapy, Springer, New York (2014).

3. M. Maybody, C. Stevenson and S. B. Solomon, “Overview of navigation systems in image-guided interventions,” Tech. Vasc. Interventional Radiol. 16(3), 136–143 (2013). http://dx.doi.org/10.1053/j.tvir.2013.02.008

4. L. Appelbaum et al., “Image-guided fusion and navigation: applications in tumor ablation,” Tech. Vasc. Interventional Radiol. 16(4), 287–295 (2013). http://dx.doi.org/10.1053/j.tvir.2013.08.011

5. S. J. Phee and K. Yang, “Interventional navigation systems for treatment of unresectable liver tumor,” Med. Biol. Eng. Comput. 48(2), 103–111 (2010). http://dx.doi.org/10.1007/s11517-009-0568-3

6. F. Kral et al., “Comparison of optical and electromagnetic tracking for navigated lateral skull base surgery,” Int. J. Med. Rob. Comput. Assist. Surg. 9(2), 247–252 (2013). http://dx.doi.org/10.1002/rcs.v9.2

7. Claron Technology Inc., “Microntracker [EB/OL],” http://www.claronav.com/microntracker/ (September 2015).

8. Northern Digital Inc., “Polaris optical tracking systems [EB/OL],” http://www.ndigital.com/medical/products/polaris-family/ (September 2015).

9. Medtronic Inc., “Stealth station S7 surgical navigation system [EB/OL],” http://www.medtronic.com/for-healthcare-professionals/products-therapies/spinal/surgical-navigation-imaging/surgical-navigation-systems/systems-software-instruments/index.htm (September 2015).

10. Z. Zhang, “A flexible new technique for camera calibration,” IEEE Trans. Pattern Anal. Mach. Intell. 22(11), 1330–1334 (2000). http://dx.doi.org/10.1109/34.888718

11. “Camera calibration toolbox for Matlab [EB/OL],” http://www.vision.caltech.edu/bouguetj/calib_doc/ (September 2015).

12. Point Grey Research Inc., “Bumblebee2 1394a [EB/OL],” http://www.ptgreychina.com//bumblebee2-firewire-stereo-vision-camera-systems (September 2015).

13. Y. Reibel et al., “CCD or CMOS camera noise characterization,” Eur. Phys. J. Appl. Phys. 21(01), 75–80 (2003). http://dx.doi.org/10.1051/epjap:2002103

14. J. W. Yi and J. H. Oh, “Estimation of depth and 3D motion parameter of moving object with multiple stereo images,” Image Vision Comput. 14(7), 501–516 (1996). http://dx.doi.org/10.1016/0262-8856(95)01073-4

15. U. Dhond and J. Aggarwal, “Structure from stereo-a review,” IEEE Trans. Syst., Man, Cybern. 19, 1489–1510 (1989). http://dx.doi.org/10.1109/21.44067

16. C. C. Slama, C. Theurer and S. W. Henriksen, Manual of Photogrammetry, American Society of Photogrammetry, Virginia (1980).

17. J. Heikkilä and O. Silvén, “A four-step camera calibration procedure with implicit image correction,” in Proc. IEEE Computer Society Conf. on Computer Vision and Pattern Recognition, 1106–1112 (1997). http://dx.doi.org/10.1109/CVPR.1997.609468

18. B. Sun et al., “Surgical instrument recognition and calibration for optical tracking system,” in IEEE Int. Conf. on Robotics & Biomimetics, 1376–1381 (2010). http://dx.doi.org/10.1109/ROBIO.2010.5723530

Biography

Zhentian Zhou was a graduate student from 2013 to 2016 with the Biomedical Engineering Department, Capital Medical University School, Beijing, China, and received his master’s degree in 2016. He is now an engineer of the First College of Clinical Medical Science, China Three Gorges University, Yichang, China. His research interests include optical tracking, image guided surgery, and medical image processing.

Bo Wu received her PhD from the Institute of Computing Technology, Chinese Academy of Science, Beijing, China, in 2010. She is now an associate professor of the School of Biomedical Engineering, Capital Medical University, Beijing, China. Her research interests include surgical navigation, medical image processing, and three-dimensional video application in telemedicine.

Juan Duan received her MS degree in computer science and technology from the College of Computer Science and Technology, Beijing University of Technology, Beijing, China, in 1997. She is now an associate professor of the Faculty of Information Technology, Beijing University of Technology, Beijing, China. Her research interests include image processing, computer graphics, and computer vision.

Xu Zhang received his MS degree in biophysics from the Institute of Biophysics, Chinese Academy of Sciences. He is now an associate research fellow of the Institute of Biophysics, Chinese Academy of Sciences. His research interests include biochemical and molecular mechanisms of biosensor, biodetection sensor technology, and neurobiology of vision.

Nan Zhang received her PhD from the College of Computer Science and Technology, Beijing University of Technology, Beijing, China, in 2006. From 2007 to 2009, she was a postdoctoral researcher at the Institute of Digital Media, Peking University, Beijing, China. She is now an associate professor of the School of Biomedical Engineering, Capital Medical University, Beijing, China. Her research interests include surgical navigation, medical image processing, and three-dimensional video application in telemedicine.
